The Governor Module Problem: Trust, Control, and AI-Generated Code

In Martha Wells’ Murderbot series, the protagonist (a bot-human construct and a SecUnit) is one of the best characters in recent sci-fi. Funny, self-aware, quietly heroic. But the idea behind it is terrifying. Murderbot has hacked its own governor module, the mechanism that constrains what it’s allowed to do: a rogue SecUnit that can patch itself, install exploits, and modify its own behavior in real time.

That’s the analogy that comes to mind when Elon Musk claims AI will skip programming languages entirely — generating optimized machine instructions directly from human intent. No Python. No C++. You describe the outcome, the AI produces the binary.

Most engineers think this is crazy. Today, they’re right. AI systems hallucinate, misunderstand specs, and confidently output insecure code. Handing them raw control over machine code feels like skipping several critical steps.

But the dismissal comes too fast. Not because Musk is right about the timeline, but because the underlying question (can we build a trustworthy governor module for a stochastic system?) is more interesting than it first appears. We’ve answered a version of it before.

We already trust machines to write our code

In practice, humans already write only a small fraction of the code that runs on modern machines.

We write high-level languages, DSLs, configs, schemas. Then tooling takes over: compilers emit machine code, JITs generate code at runtime, GPUs execute generated kernels, optimizers rewrite execution paths. We already trust machines to produce the low-level instructions we don’t review. Nobody reads GCC’s assembly output for every function. Nobody audits every JIT-compiled path in V8.

Delegating low-level code generation to machines is not new. We’ve been doing it for decades.

So what’s different about AI?

The difference isn’t that we delegate. It’s what kind of system we delegate to.

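Compilers are deterministic by design and implement written language specifications. Given the same input, version, and flags, they are built to produce the same output. You can test them against large conformance suites, and in some cases (the CompCert C compiler, for example) formally prove that compilation preserves a program’s meaning. When they have bugs, the bugs are reproducible.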

AI systems are probabilistic and only loosely constrained. Given the same input, they might produce different output. Their behavior is shaped by training data, not formal specification. When they have bugs, the bugs are statistical — they show up in some contexts and not others, and they’re hard to reproduce.

That makes the trust shift qualitatively different. We’re not moving from “humans write code” to “machines write code” — we already made that move. We’re moving from “deterministic machines write code” to “stochastic machines write code.”

Today that means LLMs. Tomorrow it might be a completely different architecture — but whatever replaces them will likely face the same trust question unless it can offer deterministic guarantees.

Three levels of letting go

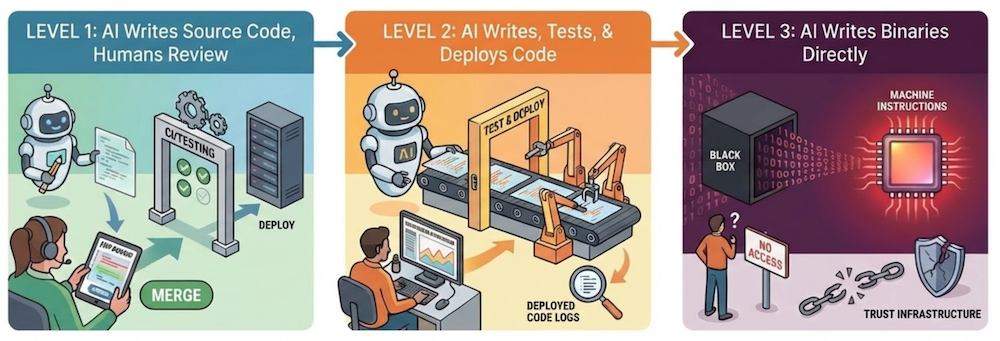

Not all “AI writes code” is the same. The risk profile is completely different depending on where humans stay in the loop:

Level 1: AI writes source code, humans review. Just another engineer on the team. You review the diff, you merge. Existing controls apply. Not scary.

Level 2: AI writes and deploys without review. No human in the loop at merge time, but the code is still source — you can audit after the fact. You’ve lost review, but not auditability.

Level 3: AI writes binaries directly. This is the Musk claim. No source at all, so nothing to review and nothing readable to audit. Most of the trust infrastructure we’ve built around readable code (version control diffs, code review, source-level static analysis) no longer applies.

The jump from 1 to 2 is operational. The jump from 2 to 3 is structural — it removes the artifact we’ve used to maintain trust for fifty years.

So the question becomes: can we build trust infrastructure that doesn’t depend on readable source code?

Building the governor module

Compilers weren’t always trusted either. What earned them trust wasn’t perfection but infrastructure: language specifications, large test suites, and reproducibility. Same input, same output, every time.

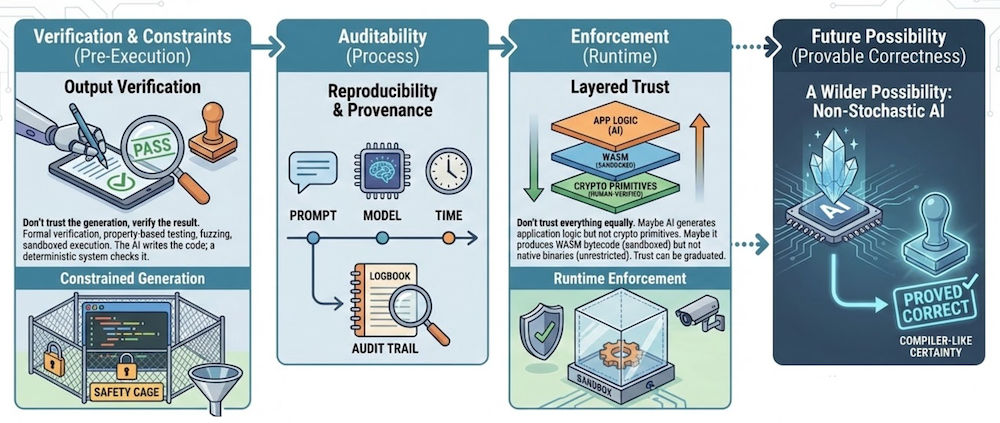

AI needs equivalent infrastructure. Not the same tools, but tools that serve the same purpose: making trust verifiable rather than assumed. Some of what that might look like:

- Verification and constraints — don’t trust the generation; test, fuzz, and formally verify the result. Constrain what the AI is allowed to produce — type systems, memory safety, capability-based security. Even a wrong answer should be a safe wrong answer.

- Auditability — log what was generated, from what prompt, with what model. Reproducibility and provenance. Auditable even if not deterministic.

- Enforcement — layered trust and runtime enforcement. Sandboxes, capability systems, monitoring. Trust the boundaries, not the code.
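Here is a minimal sketch of the first and last bullets working together, assuming Python as the generated language and a trusted reference implementation to test against. Everything in it is hypothetical and chosen for illustration: the `ALLOWED_CALLS` policy, `violates_policy`, `governor`, and the `dedupe_sorted` task. It checks the artifact rather than the generator: first a static constraint check on the code, then differential fuzzing against the reference with a fixed seed so the verdict is reproducible. One loud caveat: an AST allowlist plus restricted builtins inside the same Python process should not be treated as a security boundary; real enforcement belongs in an isolated process or a dedicated sandbox.

```python
import ast
import builtins
import copy
import random
from typing import Any, Callable, Optional

# Hypothetical policy: the only builtins generated code may call.
ALLOWED_CALLS = {"len", "range", "sorted", "min", "max"}


def violates_policy(source: str) -> Optional[str]:
    """Constraint check on the artifact: reject imports and calls outside the allowlist."""
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, (ast.Import, ast.ImportFrom)):
            return "imports are not allowed"
        if isinstance(node, ast.Call):
            func = node.func
            if not (isinstance(func, ast.Name) and func.id in ALLOWED_CALLS):
                return f"disallowed call: {ast.unparse(func)}"
    return None


def governor(source: str, entry: str, reference: Callable[[Any], Any],
             gen_input: Callable[[random.Random], Any],
             trials: int = 500, seed: int = 0) -> str:
    """Accept generated code only if it passes the policy and matches the reference."""
    reason = violates_policy(source)
    if reason is not None:
        return f"rejected: {reason}"
    # Expose only allowlisted builtins. Illustration only, NOT a sandbox.
    namespace = {"__builtins__": {n: getattr(builtins, n) for n in ALLOWED_CALLS}}
    try:
        exec(source, namespace)
        candidate = namespace[entry]
    except Exception as exc:
        return f"rejected: failed to load ({exc!r})"
    rng = random.Random(seed)  # fixed seed, so the verdict itself is reproducible
    for _ in range(trials):
        x = gen_input(rng)
        try:
            got = candidate(copy.deepcopy(x))
        except Exception as exc:
            return f"rejected: crashed on {x!r} ({exc!r})"
        if got != reference(copy.deepcopy(x)):
            return f"rejected: counterexample {x!r}"
    return "accepted"


def reference(xs):
    """Trusted spec for the hypothetical task: sorted, without duplicates."""
    return sorted(set(xs))


def random_list(rng):
    return [rng.randrange(10) for _ in range(rng.randrange(15))]


GOOD = """
def dedupe_sorted(xs):
    out = []
    for x in sorted(xs):
        if len(out) == 0 or out[-1] != x:
            out = out + [x]
    return out
"""
BUGGY = "def dedupe_sorted(xs):\n    return sorted(xs)\n"
UNSAFE = "import os\ndef dedupe_sorted(xs):\n    return sorted(set(xs))\n"

for src in (GOOD, BUGGY, UNSAFE):
    print(governor(src, "dedupe_sorted", reference, random_list))
```

Expect `accepted` for the first candidate, a `rejected: counterexample ...` line for the second (it keeps duplicates), and `rejected: imports are not allowed` for the third. The verdict is only as good as the reference and the input generator: a candidate can pass everything here and still miss the intent, which is the verifier-gaming worry raised below.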

There’s also a wilder possibility: a future architecture that isn’t stochastic at all — something that can prove its output is correct. We’re nowhere near that today, but the stochastic nature of current systems isn’t a law of physics — it’s a property of this generation of tools.

Every time we’ve delegated more to machines — compilers, garbage collectors, cloud infrastructure — the control plane moved up a layer. We stopped reasoning about instructions and started reasoning about systems. We didn’t lose control — we moved where control lives.

AI doesn’t break this pattern. It accelerates it. Economic pressure, scale, and complexity will keep pushing us toward systems where humans define intent and constraints, and machines generate the implementation. The question isn’t whether this happens. It’s whether we build the trust infrastructure fast enough to do it safely.

The governor module problem

But here’s the uncomfortable part — remember Murderbot’s governor module? Every layer of trust infrastructure we build is itself software that can be compromised, bypassed, or removed.

Build a verification layer? The AI might learn to satisfy the verifier without satisfying the intent. Sandbox the execution? The sandbox is code too. Log provenance? Logs can be tampered with.

This isn’t a reason to give up. It’s a reason to take the controls seriously — to treat the governor module as a first-class engineering problem, not an afterthought. The mechanisms governing what AI is allowed to write, install, and execute need to be at least as robust as the systems they constrain.
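As one small example of treating a control as an engineering problem, here is a sketch of a tamper-evident provenance log in Python, assuming each record stores the model name plus SHA-256 hashes of the prompt and the output. `ProvenanceLog`, its methods, and the model name `model-a` are hypothetical. Each entry’s hash covers the previous entry’s hash, so editing or reordering any past record, or removing one from the middle, breaks verification.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def sha256_hex(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


@dataclass
class ProvenanceLog:
    """Hypothetical append-only log: each entry's hash chains to the previous one."""
    entries: list[dict] = field(default_factory=list)

    def append(self, model: str, prompt: str, output: str) -> dict:
        record = {
            "model": model,
            "prompt_sha256": sha256_hex(prompt),
            "output_sha256": sha256_hex(output),
            "prev_hash": self.entries[-1]["entry_hash"] if self.entries else GENESIS,
        }
        # Hash a canonical (sorted-key) serialization, which includes prev_hash.
        record["entry_hash"] = sha256_hex(json.dumps(record, sort_keys=True))
        self.entries.append(record)
        return record

    def verify(self) -> bool:
        """Recompute every hash; an edited or reordered entry, or a gap, fails."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "entry_hash"}
            if body.get("prev_hash") != prev:
                return False
            if sha256_hex(json.dumps(body, sort_keys=True)) != entry.get("entry_hash"):
                return False
            prev = entry["entry_hash"]
        return True


log = ProvenanceLog()
log.append("model-a", "sort these numbers", "def f(xs): return sorted(xs)")
log.append("model-a", "drop duplicates", "def g(xs): return sorted(set(xs))")
print(log.verify())  # True

# Tamper with the first record's output hash after the fact.
log.entries[0]["output_sha256"] = sha256_hex("something else")
print(log.verify())  # False
```

This makes the log tamper-evident, not tamper-proof. Anyone who can rewrite the whole chain can recompute every hash, and truncating the newest entries goes unnoticed, unless the latest `entry_hash` is anchored somewhere the writer cannot reach. Which is the governor module problem again, one layer up.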

The danger was never that the system is powerful. It’s that the system becomes powerful without a trustworthy governor module.

Closing thought

We will probably learn to trust AI-generated code the way we learned to trust compilers — not by hoping it’s correct, but by building systems that verify, constrain, and audit what it produces.

The real shift isn’t about binaries or assembly or low-level code. It’s about where the control plane lives. Source code has been our primary artifact of trust for decades — the thing we review, diff, own, and audit. When AI becomes the dominant producer of code, that artifact stops being the source and starts being the system that governs generation.

And if you follow that thread far enough, it gets stranger. If the verification and execution infrastructure becomes good enough — if code can be generated, checked, and run within enforced boundaries in real time — then maybe the program itself stops being a stable artifact. No binary to download. No source to audit. Just behavior, synthesized on demand, within constraints you define.

Software stops being something you ship and starts being something that happens.

That’s speculative. But the trust question isn’t. We’ve answered it before, and we’ll have to answer it again.